On May 26th, for the first time, Twitter fact-checked a politician’s public statement.

Let’s look at what this means, and what it tells us about modern communications and how we manage them. This is not a political post, and I will not engage with the substance of the political statements, but we’ll start by looking at a Donald Trump tweet because it’s a great example of many dynamics coming to a head.

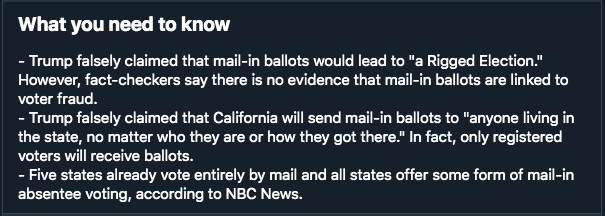

When users selected the option to “Get the facts about mail-in ballots”, they saw:

This will spur a lot of questions for people. How did this get here? Does it make sense to have it? Why hasn’t it always been there? What’s going on? To answer these, we need to back up and look at what social media is and how we think about it.

Is Social Media a Communication Network, or a Media Source?

Under the old economy we thought about communications in two categories.

- A communication network or “common carrier” like a phone network or mail system, that just passes information back and forth. It is not responsible for what’s said on it.

- A media company is a source that’s edited and curated, like a newspaper or TV channel that filters content, and so is responsible for its content.

Who Controls Content and Who is Responsible?

Suppose someone commits a crime like fraud through the mail or by telephone. The postal service or telephone company is not responsible. Suppose the same fraud happens in a newspaper. The newspaper is clearly responsible. The difference is that the newspaper’s responsibility derives from the control it has over the content.

A central question we’re wrestling with in 2020 is whether social media is a communication network or a media source. And it’s tough, because the answer is clearly both.

- It’s a communication network because billions of people sign up to conduct private & public conversations not under the control of the network

- It’s a media source because it is edited and curated (trending hashtags, featured stories, the order that the algorithm presents the content to you in, and fact check warnings such as what was added to President Trump’s tweet)

To determine who is responsible, we have to look at who controls the content on social media. The answer is both users & the platform, and so the responsibility question has to be resolved with “both are responsible”. This makes the “old way of thinking about media” not very useful, because its two categories no longer tell us much.

What’s Really Different Here?

New media allows the common person to have global reach. Back in the old economy, any person could say whatever they liked via the telephone, but they couldn’t amplify their reach to millions or billions of other people. They needed a newspaper or a book to do that, and access to those publishing sources was gated by “responsible parties” (newspapers, publishers).

Everyone is a broadcaster.

It’s as though everyone has a megaphone that costs $0 and can be heard around the world. This in turn destroys the “gating effect” that newspapers and publishers once had, because they aren’t needed for reach any more. And since the gatekeepers aren’t needed, it is unrealistic to place all responsibility on social media companies. They cannot gate content, for both technical and market reasons.

- Technical: To accurately police statements from a billion people is not feasible, even with advanced technology and AI.

- Market: If the platforms did police the statements, users could easily move to services where they aren’t policed. Creating new services is easy in 2020, which is why there are dozens of social networks.

Not a Problem to be Solved: A Situation to be Managed

Coming back to Donald Trump’s tweet, we have a situation where Twitter cannot control his content, and they also arguably cannot let factually incorrect statements stand. This is the crux of the “shared responsibility” burden. So they chose to contextualize the tweet and link to another source of information. What might this look like going forward?

While we can’t make decisions on all content automatically, certain egregious content can be automatically flagged and removed, and that’s here to stay. At the other end, there will always be a very large segment of content that gets no intervention or oversight whatsoever.

It’s the grey middle that needs work, and over the past few years we’ve seen the gradual evolution of policies to deal with this.

What Tools Do We Have in our Toolbox?

Here’s how we manage the problem today. These tools will continue to be used.

- Terms of Service, which are intentionally vague to give discretion to the company to remove borderline content

- Contextualization, or “extra facts presented alongside” (such as the fact-check link added to the Donald Trump tweet)

- Warnings and Bans, or things like “point systems” which eject users for repeated violations; three strikes and you’re out.

- Social punishment. User group reactions to your content, which give social feedback on the acceptability of what you’re doing.

It’s important to understand that any of these tactics can be overdone and produce tyranny or imbalances of power. For example, a platform can game the terms of service to eject speech it doesn’t agree with, or can ban someone who doesn’t deserve it. Social punishment can turn into angry mobs. So it can all go wrong, clearly. But that reality doesn’t undo the necessity of having and deploying the tactics as part of a shared responsibility model.

New Dynamics

- Because of the shared responsibility model, you will have a permanent fight between content creators and platforms over who has which power and who is allowed to do what. This is fundamental to a shared responsibility model. Note that this is arguably much better than content producers have ever had it, since under the old economy they were always at the whim of editors. So take users’ complaints with a grain of salt: their power has been ascendant over the last 20 years.

- The meaning of freedom of speech is going to become blurry. People love to appeal to this freedom online, but few actually understand how it is put together. The freedom prevents the government from silencing you, but Twitter silencing you is still completely legal and you have no protection against that. Centralization of communication via company-owned platforms allows the spigots of speech on certain topics to be turned off in a small number of places, and there is no functional recourse against this at present without a new legal tradition.

- Information will continue to fracture. What I mean by this is that thousands of tiny community bubbles will continue to form for special communication on narrow topics. Multiple little “wild wests” will be spawned, and the overall conversation will get noisier and more complex in aggregate. It will not re-centralize as it was under the old economy, where you could know most of what was going on and what people were thinking by reading 2-3 major newspapers.